6 On-Site Issues That Cause Your Rankings To Drop

On-site issues are among the most common causes of delay in reaching the top 10 rankings in Google. If you are planning to start a campaign or are already running one, make sure that your website is free from these errors. Here are a few common on-site issues that I have encountered and that I think have a serious negative impact on rankings. We regularly work with businesses that were unable to rank for a very long time because of such issues.

I am providing a few tips to help you fix each issue. However, you can skip them and look online for any assistance you need. Check out my free quick analysis to be sure your website is free from these errors and warnings. Best of luck!

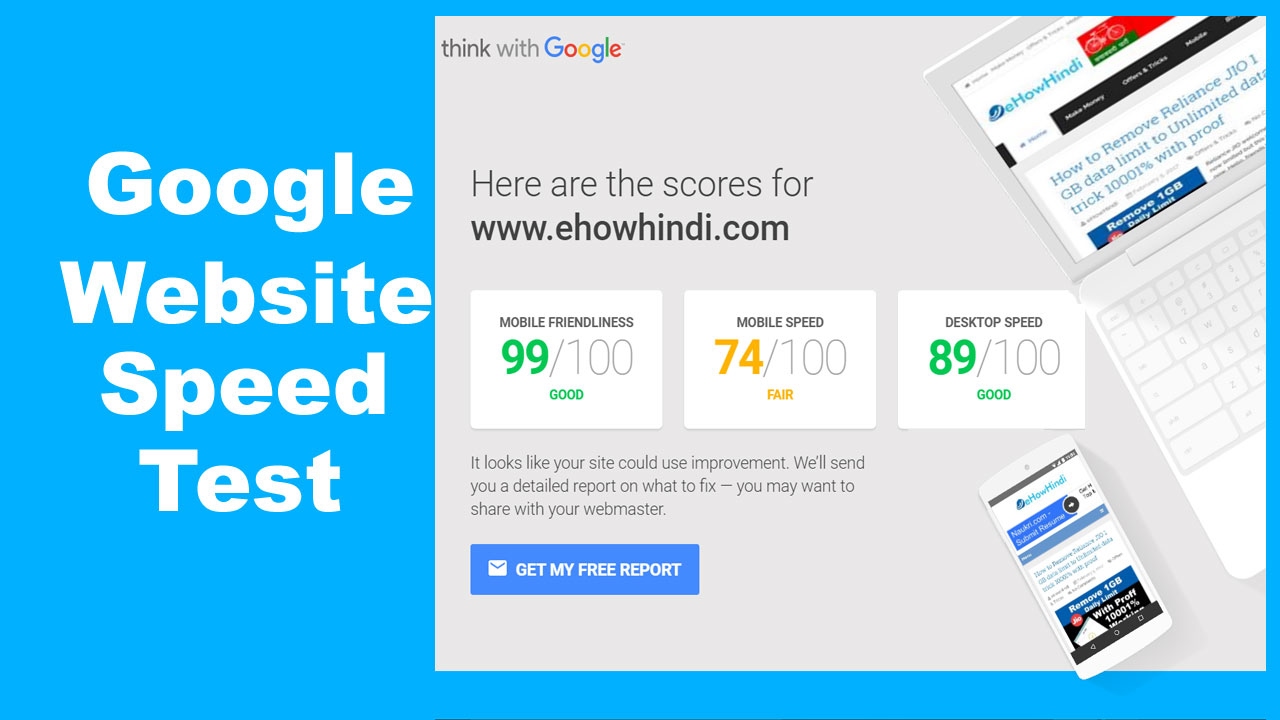

1. PAGE SPEED

The rank of your website on SERPs relies a lot on its loading speed. The faster your site is, the better the user experience (i.e. a lower bounce rate); slower websites are more likely to drop in the rankings. Google may also reduce how much it crawls your website if the server takes more than 2 seconds to respond. As a result, fewer pages will be crawled and indexed.

In 2018, Google announced that:

“Although speed has been used in ranking for some time, that signal was focused on desktop searches. Today we’re announcing that starting in July 2018, page speed will be a ranking factor for mobile searches.”

Fixing the Issue:

There are several steps you can take to improve your website speed. Minifying JS and CSS files, making CSS code more efficient, optimizing images, icons and graphics, and fine-tuning a browser caching plugin are just a few things you can add to your to-do list. If your website is built on WordPress, plugins like Autoptimize and Hummingbird can help improve its speed. It also helps to regularly run a page speed analysis tool like GTmetrix and act on its findings.
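For a rough first check before reaching for a full analysis tool, you can time how long your server takes to start responding. The sketch below is only an illustration: the function names are made up for this example, and the 2-second threshold is simply the figure mentioned above, not an official Google limit.

```python
import time
import urllib.request

# Threshold taken from the figure quoted in this article (an assumption,
# not a documented Google limit).
SLOW_THRESHOLD_SECONDS = 2.0


def measure_response_time(url, opener=urllib.request.urlopen, clock=time.perf_counter):
    """Return the seconds elapsed until the first byte of the body is read."""
    start = clock()
    with opener(url) as response:
        response.read(1)  # wait for the server to start sending the body
    return clock() - start


def is_slow(seconds, threshold=SLOW_THRESHOLD_SECONDS):
    """True when the response took longer than the threshold."""
    return seconds > threshold
```

Calling `measure_response_time("https://example.com/")` with your own URL a few times, at different hours, gives a feel for server response time. It does not measure full page rendering, so treat it as a complement to a tool like GTmetrix, not a replacement.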

2. THIN CONTENT ON SITE

Thin content refers to pages with a low word count. In your excitement to see your website up and running, you may have published a ton of pages with very few words. At the other end of the spectrum, you might have only a few pages, but with heavy, long-winded content. When Google crawls and ranks a website, it looks not just for one specific keyword in your content but also for related terms (what SEOs often call latent semantic keywords).

Pages with little or no content are a red flag to search engines. Websites with many pages of in-depth, interesting content usually take the shortcut to the top of the rankings.

Fixing the Issue:

Based on experience, there is no solution to this other than creating interesting content with proper preparation. Choose a topic on which you can write in-depth, interesting, well-crafted content. Focus on keywords within your headings. Long-tail keywords can be used within the content. Include sub-headings and bullet points to highlight important parts. Never skimp on word count, but never “stuff” your keywords either.
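To find which pages need attention first, you can count the visible words on each page. This is a minimal sketch using only Python's standard library; the 300-word cut-off and the helper names are hypothetical choices for illustration, not a threshold published by any search engine.

```python
from html.parser import HTMLParser

MIN_WORDS = 300  # hypothetical cut-off; pick one that suits your content


class _TextExtractor(HTMLParser):
    """Collects text nodes, skipping the contents of <script> and <style>."""

    def __init__(self):
        super().__init__()
        self._skip_depth = 0
        self.chunks = []

    def handle_starttag(self, tag, attrs):
        if tag in ("script", "style"):
            self._skip_depth += 1

    def handle_endtag(self, tag):
        if tag in ("script", "style") and self._skip_depth:
            self._skip_depth -= 1

    def handle_data(self, data):
        if not self._skip_depth:
            self.chunks.append(data)


def word_count(html):
    """Count whitespace-separated words in the page's text."""
    parser = _TextExtractor()
    parser.feed(html)
    parser.close()
    return len(" ".join(parser.chunks).split())


def thin_pages(pages, min_words=MIN_WORDS):
    """pages maps URL -> HTML; returns the URLs below min_words, sorted."""
    return sorted(url for url, html in pages.items() if word_count(html) < min_words)
```

Word count alone says nothing about quality, so use the list as a starting point for review and not as a verdict.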

3. DUPLICATE CONTENT

Google’s algorithmic crawlers hate duplicate content! Non-unique content doesn’t just push your rankings down; Google may even penalize you by sandboxing your website.

Fixing the Issue:

There’s no alternative for fixing duplicate content issues other than creating and hosting unique content. Check your website with tools like Copyscape and Siteliner to find and remove duplicate or copied content. In instances where you have duplicate or similar content on multiple pages or websites, use canonical tags to give Google’s bots a heads-up that several pages are variants of the same content and which one is the preferred version.
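If you want a quick in-house check alongside those tools, one common approach is to compare pages by their overlapping word sequences ("shingles"). The sketch below is an assumption-laden illustration: it is not how Copyscape, Siteliner or Google work internally, and the 0.8 threshold and function names are arbitrary choices for the example.

```python
import re
from itertools import combinations


def shingles(text, size=3):
    """Return the set of overlapping word n-grams ("shingles") in text."""
    words = re.findall(r"\w+", text.lower())
    if len(words) < size:
        return {tuple(words)} if words else set()
    return {tuple(words[i:i + size]) for i in range(len(words) - size + 1)}


def similarity(text_a, text_b, size=3):
    """Jaccard similarity of the two texts' shingle sets, from 0.0 to 1.0."""
    a, b = shingles(text_a, size), shingles(text_b, size)
    if not a or not b:
        return 0.0
    return len(a & b) / len(a | b)


def near_duplicates(pages, threshold=0.8):
    """pages maps URL -> plain text; returns (url_a, url_b, score) tuples
    for every pair scoring at or above the threshold."""
    results = []
    for (url_a, text_a), (url_b, text_b) in combinations(sorted(pages.items()), 2):
        score = similarity(text_a, text_b)
        if score >= threshold:
            results.append((url_a, url_b, score))
    return results
```

Pairs that come back from `near_duplicates` are candidates for rewriting, merging, or a canonical tag pointing at the preferred page.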

4. YOUR META TAGS

Credits To SEMRush

While actual on-page content is a great way to optimize pages and improve site rankings, using the right meta tags is also very significant. Meta tags are snippets of text, embedded in the source code of your web page, that give Google’s bots a preview of what the content is about, be it descriptive content, images or graphics. Google has difficulty indexing and ranking a page that is missing its meta tags. This impacts your site rankings.

Fixing the Issue:

You don’t need to be a code junkie to fix meta tag issues. A little knowledge of HTML can help you identify how to set and fix meta tags on your website. For example, writing a compelling meta description tag helps draw visitors to your site, and that, in turn, could boost your site rankings.
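As an illustration, here is a small sketch that checks a page's HTML for a title and a meta description. The helper names are invented for this example, and the 160-character limit is a common rule of thumb that I am assuming here, not a fixed rule from Google.

```python
from html.parser import HTMLParser


class _HeadAudit(HTMLParser):
    """Records the <title> text and the meta description, if present."""

    def __init__(self):
        super().__init__()
        self._in_title = False
        self.title = ""
        self.description = None

    def handle_starttag(self, tag, attrs):
        attrs = dict(attrs)
        if tag == "title":
            self._in_title = True
        elif tag == "meta" and (attrs.get("name") or "").lower() == "description":
            self.description = (attrs.get("content") or "").strip()

    def handle_endtag(self, tag):
        if tag == "title":
            self._in_title = False

    def handle_data(self, data):
        if self._in_title:
            self.title += data


def audit_meta(html, max_description=160):
    """Return a list of human-readable problems found in the page's meta tags."""
    parser = _HeadAudit()
    parser.feed(html)
    parser.close()
    problems = []
    if not parser.title.strip():
        problems.append("missing <title>")
    if not parser.description:
        problems.append("missing meta description")
    elif len(parser.description) > max_description:
        # 160 is an assumed rule of thumb, not an official limit
        problems.append("meta description longer than %d characters" % max_description)
    return problems
```

An empty list from `audit_meta` means the basics are in place; whether the description is actually compelling is still a job for a human.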

5. ORPHANED PAGES

Google “crawls” through the pages on your website by following the internal links to each one. Sometimes, though, there are “orphan pages” that have no incoming links. This may happen for several reasons, such as old pages still hosted on your site or pages with past promos left online with no links to them. When Google can’t detect a hyperlink to a page, no authority or link juice passes to that page.

Fixing the Issue:

Collate all the pages on your website; you can use Screaming Frog for this. Compare the URLs listed in your log files with the list of all actively linked URLs on your website. URLs that appear in the logs but not in the crawl are your orphan pages, and you must decide whether to remove them or bring them back by linking to them.
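The comparison itself boils down to a set difference. Here is a minimal sketch, assuming you have already exported both lists (one from your server logs, one from a crawl); the function names and the URL normalization rules are my own simplifications.

```python
from urllib.parse import urlsplit


def normalize(url):
    """Reduce a URL to its path, without query string, fragment or trailing slash."""
    path = urlsplit(url).path or "/"
    return path.rstrip("/") or "/"


def find_orphans(log_urls, linked_urls):
    """Return paths seen in the server logs that no crawled page links to."""
    linked = {normalize(u) for u in linked_urls}
    return sorted({normalize(u) for u in log_urls} - linked)
```

For example, `find_orphans(["/about", "/old-promo/"], ["/about/"])` returns `["/old-promo"]`. Note that this normalization ignores the domain, so it only makes sense when both lists come from the same site.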

6. BROKEN LINKS

Broken internal or external links can trip up web crawlers when they index a website. As these digital “auditors” move from page to page, sniffing out links, they assign page ratings. Broken links may also frustrate human visitors, who may stop visiting your site. And when user experience drops, so does your site ranking.

Fixing the Issue:

To fix this issue, you first need to discover the crawl errors. Use web auditing tools like Google Search Console or Screaming Frog, or paid auditing tools like SEMRush, to find broken links, then update, redirect or remove each one.
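For a single page, a small script can do a similar job. The sketch below is an illustration under a few assumptions: it only looks at `<a href>` links, it treats any status of 400 or above (or no response at all) as broken, and it sends `HEAD` requests, which some servers reject even when the page is fine. All function names are made up for this example.

```python
from html.parser import HTMLParser
from urllib.error import HTTPError
from urllib.parse import urljoin
from urllib.request import Request, urlopen


class _LinkCollector(HTMLParser):
    """Collects the href value of every <a> tag."""

    def __init__(self):
        super().__init__()
        self.hrefs = []

    def handle_starttag(self, tag, attrs):
        if tag == "a":
            href = dict(attrs).get("href")
            if href:
                self.hrefs.append(href)


def extract_links(html, base_url):
    """Return absolute http(s) links found in the page, in document order."""
    parser = _LinkCollector()
    parser.feed(html)
    parser.close()
    links = [urljoin(base_url, href) for href in parser.hrefs]
    return [link for link in links if link.startswith(("http://", "https://"))]


def http_status(url, timeout=10):
    """Return the HTTP status code, or None if the request failed outright."""
    try:
        with urlopen(Request(url, method="HEAD"), timeout=timeout) as resp:
            return resp.status
    except HTTPError as err:
        return err.code  # the server answered, but with an error status
    except OSError:
        return None  # DNS failure, refused connection, timeout, etc.


def broken_links(html, base_url, get_status=http_status):
    """Return (url, status) for every link that looks broken."""
    broken = []
    for url in extract_links(html, base_url):
        status = get_status(url)
        if status is None or status >= 400:
            broken.append((url, status))
    return broken
```

The `get_status` parameter lets you swap in a different checker, for example one that falls back to `GET` when a server refuses `HEAD`.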

PROTECT YOUR SITE RANKING

I share this information with you because many of us underestimate the role of each of these factors. Taking them for granted will definitely damage a website’s ranking. If you wish to protect and enhance your own ranking, make sure you keep track of these common site ranking destroyers. And always remember: you can do it yourself, but in case you are not sure how, I am here to help!